The Top 5 Free Containerization Tools

Introduction

In 2006, several Google engineers started work on a Linux kernel feature called cgroups (control groups) to limit and isolate hardware resource usage (e.g., RAM, CPU, disk I/O, and network). This functionality was merged into the mainline Linux kernel in 2008, paving the way for the containerization technologies we use today, such as Docker, Cloud Foundry, and LXC.

Since 2013, containerization has become a significant trend in fields such as web hosting and software development. It is both an alternative and a companion to traditional virtualization. Because hardware-level virtualization has to run an entire guest operating system for each virtual machine, it can be very resource-intensive. Containers, by contrast, share the host machine’s kernel, so hardware resources are not spent running several separate operating systems. Overall, containers enable faster application deployment and, because each application runs isolated from the others, can make it more secure.

In today’s article, we will cover the top five free containerization tools for managing your container software, ranked by number of users according to StackShare.

Kubernetes

Kubernetes is by far the most used containerization tool. It is open-source container orchestration software used to manage containerized applications. Google initially developed Kubernetes but donated it to the Cloud Native Computing Foundation (a collaboration between Google and the Linux Foundation focused on advancing container technologies) soon after its initial release.

As containerization has grown in popularity, managing large numbers of containerized applications has become more challenging. Kubernetes lets us manage and scale large clusters efficiently and provides features such as load balancing, automated application rollouts and rollbacks, storage orchestration, storage and management of sensitive information, and self-healing (restarting or replacing containers that fail).
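
To make the rollout, rollback, and self-healing features more concrete, here is a minimal sketch using a Deployment, the Kubernetes object that keeps a requested number of replicas running. The directory k8s-demo, the names hello and web, the nginx image tags, and the RUN_DEMO opt-in switch are all assumptions made for illustration. Nothing is sent to a cluster unless RUN_DEMO=1 is set.

```shell
# Hypothetical names: directory k8s-demo, Deployment "hello", container "web".
mkdir -p k8s-demo
cat > k8s-demo/deployment.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: hello
spec:
  replicas: 3              # Kubernetes replaces replicas that fail (self-healing)
  selector:
    matchLabels:
      app: hello
  template:
    metadata:
      labels:
        app: hello
    spec:
      containers:
        - name: web
          image: nginx:1.25
          ports:
            - containerPort: 80
EOF

# RUN_DEMO is an opt-in switch invented for this sketch.
if [ "${RUN_DEMO:-0}" = 1 ]; then
  kubectl apply -f k8s-demo/deployment.yaml          # create or update the Deployment
  kubectl rollout status deployment/hello            # wait for the rollout to finish
  kubectl set image deployment/hello web=nginx:1.27  # trigger a rolling update
  kubectl rollout undo deployment/hello              # roll back to the previous revision
else
  echo "Manifest written to k8s-demo/deployment.yaml (set RUN_DEMO=1 to apply it)"
fi
```

Running the script as is only writes the manifest, so it can be reviewed before it is applied to a real cluster.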

A Kubernetes cluster contains a set of worker nodes that run containerized applications and a control plane that organizes and manages the cluster. Every Kubernetes cluster needs to have at least one worker node.

Pods run on the nodes. A Pod is the smallest deployable unit of computing that we can create and manage in Kubernetes; it wraps one or more containers that make up a component of our application. An API server at the heart of the control plane enables communication between users, the different parts of the cluster, and external components.
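
As a rough illustration of a Pod and of the API server's role, the sketch below writes a single-container Pod manifest and, only when explicitly enabled, submits it with kubectl (which talks to the API server). The names hello-pod and web, the k8s-demo directory, the image tag, and the RUN_DEMO switch are assumptions for this example.

```shell
# Hypothetical names: directory k8s-demo, Pod "hello-pod", container "web".
mkdir -p k8s-demo
cat > k8s-demo/pod.yaml <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: hello-pod
  labels:
    app: hello
spec:
  containers:
    - name: web
      image: nginx:1.25
      ports:
        - containerPort: 80
EOF

# RUN_DEMO is an opt-in switch invented for this sketch.
if [ "${RUN_DEMO:-0}" = 1 ]; then
  kubectl apply -f k8s-demo/pod.yaml   # send the manifest to the API server
  kubectl get pod hello-pod -o wide    # show which node the Pod was scheduled on
else
  echo "Manifest written to k8s-demo/pod.yaml (set RUN_DEMO=1 to apply it)"
fi
```

In practice, Pods are usually created indirectly through higher-level objects rather than one by one, but a bare Pod is the simplest way to see the unit itself.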

Docker Compose

Docker Compose is a Docker tool for configuring, managing, and running multi-container Docker applications. It allows us to create and start services and to define them through rules stored in the docker-compose.yaml configuration file.

The tool was designed to apply configuration files so that we do not have to run various shell scripts manually. Using it involves three basic steps:

- Define the environment of our application with a Dockerfile.

- Use the docker-compose.yaml configuration file to define services in our application.

- Use the docker-compose up command to start our app and services all at once.
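
The three steps above can be sketched as follows. The compose-demo directory, the web and cache service names, the image tags, the port mapping, and the RUN_DEMO switch are assumptions made for illustration; older Compose releases may also expect a top-level version key in the YAML file.

```shell
# Hypothetical project directory for this sketch.
mkdir -p compose-demo

# Step 1: describe the application environment in a Dockerfile.
cat > compose-demo/Dockerfile <<'EOF'
FROM nginx:1.25
COPY index.html /usr/share/nginx/html/index.html
EOF
echo '<h1>Hello from Compose</h1>' > compose-demo/index.html

# Step 2: define the services in docker-compose.yaml.
cat > compose-demo/docker-compose.yaml <<'EOF'
services:
  web:
    build: .            # built from the Dockerfile above
    ports:
      - "8080:80"       # host port 8080 -> container port 80
  cache:
    image: redis:7
EOF

# Step 3: start everything at once (opt-in; RUN_DEMO is invented for this sketch).
if [ "${RUN_DEMO:-0}" = 1 ]; then
  (cd compose-demo && docker-compose up -d && docker-compose ps)
else
  echo "Files written to compose-demo/ (set RUN_DEMO=1 to run docker-compose up)"
fi
```

With the services running, docker-compose down in the same directory stops and removes them again.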

Docker Compose has several advantages, such as easy and fast configuration via YAML, deployment on a single host, good security, and versatility. It is used in various application environments (production, staging, development, and testing).

The Docker Compose tool can run on Windows, macOS, and 64-bit Linux operating systems. On each of these operating systems, Docker Compose requires Docker Engine, which can be installed on your local machine or on a remote server.

Helm

Helm is a Kubernetes package manager that packages, configures, and deploys apps and services into Kubernetes clusters. Simply put, Helm is to Kubernetes what apt is to Ubuntu and yum is to CentOS.

Helm serves several purposes: creating and sharing packaged applications (Helm Charts), managing releases in Kubernetes, creating builds of Kubernetes applications, and managing Kubernetes manifest files.

At the heart of Helm are the previously mentioned Helm Charts. A chart is a collection of files that describes a related set of Kubernetes resources, laid out as shown below. The files for a single chart reside inside one directory. Charts are used both for simple tasks (installing WordPress on Kubernetes) and for complex deployments (full-stack web applications).

testchart/
    charts/ -- dir containing charts on which our current chart depends
    crds/ -- dir for custom resource definitions
    templates/ -- dir with templates that can generate Kubernetes manifest files
    Chart.yaml -- required YAML file that contains information about the chart
    values.yaml -- YAML file for defining default config values for this chart
    LICENSE -- optional file to define a license for the chart
    README.md -- optional human-readable README file

Every chart has a descriptor YAML file called Chart.yaml and one or more Kubernetes manifest files (templates). The Chart.yaml file can contain various fields, three of which must be defined:

- apiVersion: The chart API version.

- name: The chart name.

- version: The chart version, following SemVer 2.
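
As a small illustration of the required fields, the sketch below writes a minimal Chart.yaml by hand. The chart name demo-chart, the version number, and the description are placeholders chosen for this example; apiVersion v2 is the value used by charts written for Helm 3.

```shell
# Hypothetical chart directory and name for this sketch.
mkdir -p demo-chart
cat > demo-chart/Chart.yaml <<'EOF'
apiVersion: v2          # chart API version (v2 for Helm 3 charts)
name: demo-chart        # chart name, matching the directory name
version: 0.1.0          # chart version, following SemVer 2
description: A placeholder chart used to illustrate the required fields
EOF

cat demo-chart/Chart.yaml
```

The description field is optional and is included only to show that other fields can sit alongside the three required ones.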

There are plenty of existing charts ready for deployment for various applications, but if you want to create your own Helm Chart, run the command below.

helm create testchart

This command automatically creates a testchart directory with the core files described above (such as Chart.yaml, values.yaml, and the templates/ directory), and you can start customizing the chart by editing them according to your needs.

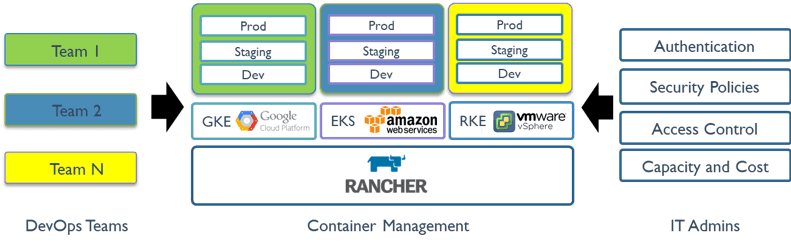
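
One common way to customize a chart without editing its files is to pass an override file at install time. The sketch below assumes that the scaffold generated by helm create exposes replicaCount and service settings in its values.yaml (worth checking in your own chart); the file name my-values.yaml, the release name my-release, and the RUN_DEMO switch are assumptions for this example.

```shell
# Hypothetical override file; keys are assumed to exist in testchart/values.yaml.
cat > my-values.yaml <<'EOF'
replicaCount: 2
service:
  type: NodePort
  port: 80
EOF

# RUN_DEMO is an opt-in switch invented for this sketch.
if [ "${RUN_DEMO:-0}" = 1 ]; then
  helm lint ./testchart                                   # check the chart for problems
  helm install my-release ./testchart -f my-values.yaml   # deploy with the overrides
  helm list                                               # show installed releases
else
  echo "Overrides written to my-values.yaml (set RUN_DEMO=1 to install the chart)"
fi
```

Values in the override file take precedence over the defaults in values.yaml, so the chart itself stays unchanged.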

Rancher

Rancher is an open-source cloud orchestration and cluster management tool for deploying, running, and managing containers in production. It simplifies operating container clusters on the cloud or infrastructure platform of your choice.

Rancher provides its users with several cluster deployment choices. Users can:

- Create Kubernetes clusters with RKE (Rancher Kubernetes Engine) on bare-metal servers.

- Import, configure, and manage their pre-made Kubernetes clusters.

- Utilize hosted Kubernetes services from infrastructure providers, such as Google Kubernetes Engine (GKE), Azure Kubernetes Service (AKS), or Amazon Elastic Kubernetes Service (EKS).

It is worth noting that the Rancher server can be installed on a Kubernetes cluster or on a single node (as a Docker container), but the nodes must meet certain requirements that vary with the size of the Rancher deployment and the use case.
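
For the single-node option, a minimal sketch might look like the following. It uses the rancher/rancher image with the latest tag and ports 80 and 443, which is a common quick-start setup generally suited to testing rather than production; the start_rancher function name and the RUN_DEMO switch are assumptions for this example.

```shell
# Image reference assumed for this sketch; pin a specific version in real use.
RANCHER_IMAGE="rancher/rancher:latest"

start_rancher() {
  # Run the Rancher server as a single Docker container.
  docker run -d --restart=unless-stopped \
    -p 80:80 -p 443:443 \
    --privileged \
    "$RANCHER_IMAGE"
}

# RUN_DEMO is an opt-in switch invented for this sketch.
if [ "${RUN_DEMO:-0}" = 1 ]; then
  start_rancher
else
  echo "Would start $RANCHER_IMAGE on ports 80 and 443 (set RUN_DEMO=1 to run it)"
fi
```

Once the container is up, the Rancher UI is reached over HTTPS on the host's address.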

The Rancher team has several best practice recommendations to ensure good stability and performance:

- Install Rancher on a dedicated Kubernetes cluster that is not running other processes or services.

- Back up the Statefile. It is vital for cluster maintenance and recovery.

- Optimize the nodes for Kubernetes by disabling swap, ensuring good network connectivity, and opening the required network ports.

- Deploy nodes in the cluster in the same data center location to ensure high performance of the nodes and cluster as a whole.
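
As a small aid for the swap recommendation above, the sketch below counts active swap areas on a Linux node by reading /proc/swaps. The helper name swap_device_count is hypothetical, and the script only reports; it does not change any system settings.

```shell
swap_device_count() {
  # /proc/swaps holds one header line followed by one line per active swap area.
  swaps_file="${1:-/proc/swaps}"
  if [ ! -r "$swaps_file" ]; then
    echo 0
    return 0
  fi
  awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }' "$swaps_file"
}

count="$(swap_device_count)"
if [ "$count" -gt 0 ]; then
  echo "$count swap area(s) active; consider running: sudo swapoff -a"
else
  echo "No active swap detected (or /proc/swaps is not available on this system)"
fi
```

Note that swapoff -a lasts only until the next reboot; swap entries in /etc/fstab also need to be removed or commented out to keep swap disabled.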

Docker Swarm

Docker Swarm is a container orchestration tool built into Docker Engine that enables its users to create, deploy, and manage containers across one or more host machines, which together form a Docker cluster (a swarm). In the cluster, all activity is controlled by a swarm manager, whereas the individual nodes (Docker daemons) interact using the Docker API.

There are several valuable features of Docker Swarm:

- Decentralized access, enabling various users and teams to access and manage the cluster.

- A good level of security.

- Load balancing.

- Multi-host networking. The swarm manager automatically assigns addresses to containers on the network when an application is initialized or updated.

- Rollbacks. If an update causes a problem, we can roll the service back to its previous version.

- Scalability.
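
Several of the features above (load-balanced services, scaling, and rollbacks) map onto a handful of Docker CLI commands, sketched below. The service name web, the replica counts, the image tags, the port mapping, and the run helper with its RUN_DEMO switch are assumptions for this example. By default the script only prints the commands.

```shell
SERVICE_NAME="web"   # hypothetical service name
REPLICAS=3

run() {
  # Print each command; execute it only when RUN_DEMO=1 is set.
  echo "+ $*"
  if [ "${RUN_DEMO:-0}" = 1 ]; then
    "$@"
  fi
}

run docker swarm init                                   # make this host a swarm manager
run docker service create --name "$SERVICE_NAME" \
  --replicas "$REPLICAS" --publish 8080:80 nginx:1.25   # replicated, load-balanced service
run docker service scale "$SERVICE_NAME=5"              # scale out to five replicas
run docker service update --image nginx:1.27 "$SERVICE_NAME"   # rolling update
run docker service update --rollback "$SERVICE_NAME"    # return to the previous version
run docker service ls                                   # list services and replica counts
```

Additional hosts join the swarm with the docker swarm join command that docker swarm init prints.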

Docker Swarm is a comparable alternative to Kubernetes. Where Kubernetes runs a kubelet agent on each node, Docker Swarm relies on the Docker daemon on each node. Swarm clustering is part of Docker itself, while Kubernetes is a separate system with its own control plane. Kubernetes has built-in monitoring and auto-scaling, whereas Docker Swarm has automatic load balancing and a directly integrated command-line interface, is easier to install and use, and is more lightweight.

Conclusion

In today’s article, we looked at the five most used containerization tools. Since containerization became a mainstream trend in 2013, the technology has vastly improved and has greatly sped up application deployment and the workflows around it.

If you plan on building your containerized infrastructure, Liquid Web has many available hosting solutions, including options for Dedicated, VPS, Private Cloud, and Server Clusters. Reach out to our Most Helpful Humans In Hosting® to learn more about setup customization options today!

About the Author: Thomas Janson

Thomas Janson joined Liquid Web's Operations team in 2019. When he is not behind the keyboard, he enjoys reading books and financial statements, playing tennis, and spending time outdoors.
